In 2026, a sitemap.xml file is no longer just a list of links for Googlebot. It has become a knowledge manifest that feeds RAG (Retrieval-Augmented Generation) systems and AI models. Proper sitemap analysis enables not only faster indexing, but above all quality control over the data that ends up in search engines’ vector databases.

Sitemap.xml analysis helps detect:

- Broken URLs — pages returning 404 or redirects

- Missing meta tags — pages without title or description

- Duplicate content — identical titles on different URLs

- Titles too long/short — SERP snippet issues

- Thin content — pages with insufficient content

- Outdated pages — content to remove or update

Traditional Analysis Methods vs Uper SEO Auditor

Screaming Frog

Popular desktop crawler, but:

- Requires installation (Windows, macOS, or Linux)

- Free version limited to 500 URLs

- Crawls the entire site by default; auditing only the sitemap URLs means switching to list mode

- No native meta tag analysis from the sitemap level

Google Search Console

Shows indexing status, but:

- No full data export (report exports are capped at 1,000 rows)

- Limited meta tag information

- Delayed data (up to several days)

Manual Analysis

You can open sitemap.xml and check URLs one by one. With 50 pages, it takes an hour. With 500 — a whole day.

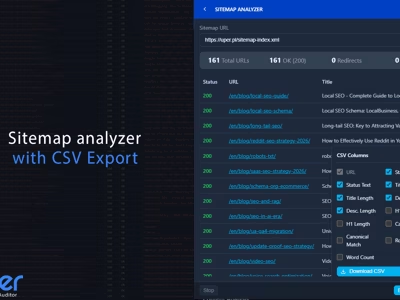

Sitemap Analyzer in Uper SEO Auditor

The UPER SEO Auditor extension includes a built-in sitemap analyzer that works directly in the browser:

- Fetches all URLs from sitemap.xml

- Checks the title and meta description of each page

- Shows character counts and HTTP status codes

- Detects thin content, duplicates, and redirects

- Exports results to CSV

Where Does the Crawler Start?

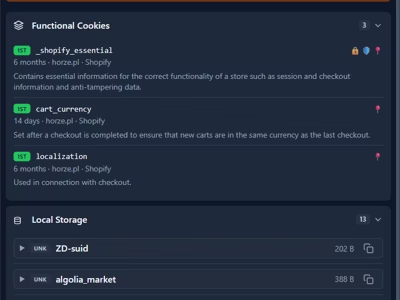

A sitemap is a roadmap. For a crawler (or AI scraper) to find it without being told where it is, it should be declared in the robots.txt file; submitting it in Google Search Console only covers Google. The Uper SEO Auditor extension automatically parses the robots.txt contents looking for the Sitemap: directive. This is the first test: if the sitemap isn’t declared there, crawlers that don’t know its address have to discover pages by following your internal link structure, which is slower and wastes crawl budget.

You can also enter a custom URL, e.g., /sitemap_index.xml or /news-sitemap.xml.
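The robots.txt check described above can be sketched in a few lines of Python. This is only an illustration of the idea, not the extension's actual code; the function name `find_sitemaps` is hypothetical.

```python
def find_sitemaps(robots_txt: str) -> list[str]:
    """Return every URL declared with a Sitemap: directive in robots.txt."""
    sitemaps = []
    for raw_line in robots_txt.splitlines():
        # Drop trailing comments; this assumes the sitemap URL has no "#" fragment.
        line = raw_line.split("#", 1)[0].strip()
        key, sep, value = line.partition(":")
        # The directive name is case-insensitive; the URL is kept as written.
        if sep and key.strip().lower() == "sitemap" and value.strip():
            sitemaps.append(value.strip())
    return sitemaps
```

An empty result is the signal to fall back to a custom URL such as /sitemap_index.xml.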

Sitemap Analyzer in the Chrome side panel with sitemap analysis and a summary of detected issues.


Analysis Process and Rate Limiting

After clicking “Analyze,” the extension:

- Fetches sitemap.xml — parses XML and extracts all URLs

- Handles sitemap index — if the sitemap contains links to other sitemaps, fetches them recursively

- Checks each URL — batch processing with rate limiting (5 concurrent requests)

- Extracts meta data — title, description, HTTP status, word count
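The first two steps (parsing the XML and following a sitemap index recursively) might look roughly like the sketch below. It assumes a caller-supplied `fetch` function that returns the XML text for a URL; both `fetch` and `collect_urls` are hypothetical names, not part of the extension.

```python
import xml.etree.ElementTree as ET
from typing import Callable, Optional

# Namespace defined by the sitemaps.org protocol.
NS = "{http://www.sitemaps.org/schemas/sitemap/0.9}"


def collect_urls(
    sitemap_url: str,
    fetch: Callable[[str], str],
    seen: Optional[set] = None,
) -> list[str]:
    """Return all page URLs, following sitemap index files recursively."""
    seen = set() if seen is None else seen
    if sitemap_url in seen:  # guard against index files that reference each other
        return []
    seen.add(sitemap_url)

    root = ET.fromstring(fetch(sitemap_url))
    locs = [el.text.strip() for el in root.iter(f"{NS}loc") if el.text]

    if root.tag == f"{NS}sitemapindex":
        # Each <loc> points at another sitemap file, so descend into it.
        urls: list[str] = []
        for child_sitemap in locs:
            urls.extend(collect_urls(child_sitemap, fetch, seen))
        return urls
    return locs  # a plain <urlset>: each <loc> is a page URL
```

Passing `fetch` in keeps the parsing logic testable without network access.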

Server Safety

To avoid overloading the server, the analyzer:

- Sends max 5 requests simultaneously

- Waits 200ms between batches

- Allows stopping the analysis at any time

These safeguards mean you can safely analyze even large websites without risking a server block.
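To make the batching concrete, here is a minimal asyncio sketch using the two limits quoted above (5 simultaneous requests, 200 ms between batches). The `check` coroutine is a placeholder for whatever performs the actual request; how the extension implements this internally is not documented here.

```python
import asyncio
from typing import Awaitable, Callable

BATCH_SIZE = 5     # max simultaneous requests
BATCH_PAUSE = 0.2  # seconds to wait between batches (200 ms)


async def check_in_batches(
    urls: list[str],
    check: Callable[[str], Awaitable],
    batch_size: int = BATCH_SIZE,
    pause: float = BATCH_PAUSE,
) -> list:
    """Run `check` over all URLs, at most `batch_size` at a time."""
    results = []
    for start in range(0, len(urls), batch_size):
        batch = urls[start:start + batch_size]
        # All requests in one batch run concurrently; the next batch waits for them.
        results.extend(await asyncio.gather(*(check(url) for url in batch)))
        if start + batch_size < len(urls):
            await asyncio.sleep(pause)  # give the server a short break
    return results
```

Results come back in the same order as the input URLs, which keeps the output table stable between runs.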

Analysis Results

After completion, you’ll see a table with all URLs:

| Column | Description |
|---|---|
| URL | Full page address |
| Title | Page title (from <title>) |
| Title Length | Title character count |
| Description | Meta description |
| Desc Length | Description character count |

Click a column header to sort results — e.g., by Title Length to quickly find titles that are too short or too long.
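As an illustration of where these columns could come from, the sketch below pulls the title and meta description out of a page's HTML with Python's standard `html.parser`. The class and function names are made up for this example, and the extension may compute its values differently.

```python
from html.parser import HTMLParser


class MetaExtractor(HTMLParser):
    """Collects the <title> text and the meta description of one page."""

    def __init__(self):
        super().__init__()
        self.title = ""
        self.description = ""
        self._in_title = False

    def handle_starttag(self, tag, attrs):
        attr_map = dict(attrs)
        if tag == "title":
            self._in_title = True
        elif tag == "meta" and (attr_map.get("name") or "").lower() == "description":
            self.description = (attr_map.get("content") or "").strip()

    def handle_endtag(self, tag):
        if tag == "title":
            self._in_title = False

    def handle_data(self, data):
        if self._in_title:
            self.title += data


def table_row(url: str, html: str) -> dict:
    """Build one row with the columns listed in the table above."""
    parser = MetaExtractor()
    parser.feed(html)
    title = " ".join(parser.title.split())  # collapse whitespace and newlines
    return {
        "URL": url,
        "Title": title,
        "Title Length": len(title),
        "Description": parser.description,
        "Desc Length": len(parser.description),
    }
```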

The End of Priority and Changefreq (Case Study: WhitePress)

Many SEO specialists still spend time configuring the <priority> and <changefreq> parameters. The WhitePress sitemap (4,473 URLs), analyzed with the Uper extension, sets both fields on every URL, yet Google’s sitemap documentation states that it ignores both values. Of the optional fields, Google uses only <lastmod>, and only when it is consistently accurate. Crawlers decide on visit frequency based on signals such as site authority and content freshness, so tuning these tags repeats a myth from a decade ago.

WhitePress sitemap audit: all 4,473 URLs carry priority and changefreq fields that Google ignores.
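To check how much of your own sitemap is taken up by these fields, a short script can count them. This is a sketch that assumes a standard sitemaps.org `<urlset>` file; `count_optional_fields` is a hypothetical helper, not a feature of the extension.

```python
import xml.etree.ElementTree as ET

NS = "{http://www.sitemaps.org/schemas/sitemap/0.9}"


def count_optional_fields(sitemap_xml: str) -> dict:
    """Count <url> entries and how many of them set each optional field."""
    root = ET.fromstring(sitemap_xml)
    counts = {"url": 0, "priority": 0, "changefreq": 0, "lastmod": 0}
    for url in root.iter(f"{NS}url"):
        counts["url"] += 1
        for field in ("priority", "changefreq", "lastmod"):
            if url.find(f"{NS}{field}") is not None:
                counts[field] += 1
    return counts
```

If `priority` and `changefreq` match the `url` count while `lastmod` is far lower, the sitemap generator is spending its effort on the fields Google does not read.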


Issues Summary: A Technical X-Ray of Your Content (Case Study: Linkhouse)

The Issues Summary module enables rapid detection of semantic errors. Auditing the linkhouse.pl domain (318 URLs), we can see issues that directly affect how AI “understands” your site.

Thin Content (< 300 words)

11 pages (about 3%) have too little content. For AI systems, such pages are useless — they add no value to the knowledge base and may drag down the overall quality score of your site.

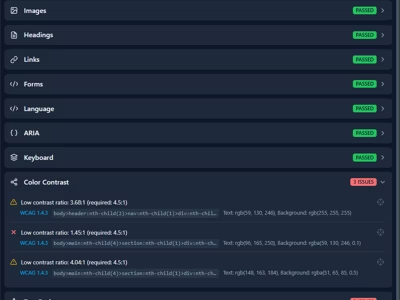

Meta Tag Errors

The audit flagged 140 titles as too long (>60 characters) and 10 pages missing an H1 heading. Without a clear hierarchy, AI scrapers struggle to properly chunk text into fragments.

Redirects (3xx)

24 URLs in the sitemap are redirects. A sitemap should only contain final URLs (200 OK). Submitting redirects wastes Google’s crawling resources.

Issues Summary for linkhouse.pl — 140 overly long titles, 24 redirects, and 11 thin content pages.
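The checks behind such a summary can be approximated with the thresholds quoted above (fewer than 300 words, titles over 60 characters, 3xx status codes). The record layout (`status`, `title`, `word_count`, `h1`) is an assumption made for this sketch and does not describe the extension's internal format.

```python
from collections import Counter

THIN_CONTENT_WORDS = 300  # pages below this word count are flagged as thin
MAX_TITLE_LENGTH = 60     # titles longer than this are flagged as too long


def classify(page: dict) -> list[str]:
    """Return the issue labels that apply to one audited page."""
    issues = []
    status = page["status"]
    if 300 <= status < 400:
        issues.append("redirect")
    elif status >= 400:
        issues.append("broken")
    else:
        # Content checks only make sense for pages that actually loaded.
        if len(page.get("title", "")) > MAX_TITLE_LENGTH:
            issues.append("title too long")
        if page.get("word_count", 0) < THIN_CONTENT_WORDS:
            issues.append("thin content")
        if not page.get("h1"):
            issues.append("missing H1")
    return issues


def issues_summary(pages: list[dict]) -> Counter:
    """Count how many pages fall under each issue label."""
    return Counter(issue for page in pages for issue in classify(page))
```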


Sitemap Analyzer — Interactive Preview

The “Preview in New Tab” button opens a full audit in a SERP-style layout. Here you can evaluate how your pages appear “through the eyes of a crawler.” Filtering lets you quickly isolate addresses with overly long titles, clearly visible in the detailed WhitePress audit.

Preview mode for Linkhouse — full URL list with titles, descriptions, and link statistics.


WhitePress audit — duplicate page titles that need unique descriptions.


Extract URLs: From Analysis to Action

One of Uper’s most powerful features is Extract URLs. The tool enables smart data filtering before export. You can, for example, extract only broken links (Broken Links 4xx/5xx) from the WhitePress sitemap and download them as CSV for immediate fixing.
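The same filter-then-export idea can be reproduced outside the extension. The sketch below assumes each audited URL is a dict with `url` and `status` keys (an assumption of this example) and writes the matching rows as CSV text.

```python
import csv
import io
from typing import Callable, Iterable


def is_broken(status: int) -> bool:
    """True for client and server errors (4xx/5xx)."""
    return 400 <= status < 600


def extract_urls(rows: Iterable[dict], predicate: Callable[[int], bool]) -> str:
    """Return CSV text containing only the rows whose status matches."""
    buffer = io.StringIO()
    writer = csv.writer(buffer, lineterminator="\n")
    writer.writerow(["URL", "Status"])
    for row in rows:
        if predicate(row["status"]):
            writer.writerow([row["url"], row["status"]])
    return buffer.getvalue()
```

Swapping the predicate, for example `lambda s: 300 <= s < 400`, gives a redirects-only export from the same data.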

Filtering URLs by HTTP status code: quick extraction of pages with 404 errors and 301 redirects.


WhitePress audit — 1,562 duplicate URLs and 1,905 duplicate titles requiring immediate attention.


Try Uper SEO Auditor

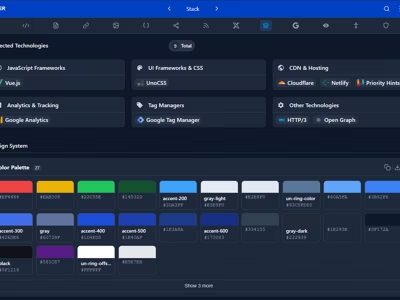

Free Chrome extension that analyzes your sitemap, meta tags, Web Vitals, and dozens of other technical parameters — right in the browser side panel.

Practical Applications of Audit Data

After exporting data to CSV, you can open it in Google Sheets, Microsoft Excel, LibreOffice Calc, or Numbers for in-depth analysis.

URL,Title,Title Length,Description,Desc Length

https://example.com/,Example Site - Home,19,Welcome to Example Site,23

https://example.com/about/,About Us | Example,18,Learn about our company,23

https://example.com/contact/,Contact - Example Site,22,,0

1. SEO Title Audit

Optimal title length is 50-60 characters. In your spreadsheet, use:

=IF(C2<30,"Too short",IF(C2>60,"Too long","OK"))

2. Finding Pages Without Description

Filter the “Desc Length” column by value 0. These pages need meta descriptions.

3. Detecting Duplicate Titles

In the spreadsheet, use conditional formatting to highlight duplicate titles:

- Select the Title column

- Format → Conditional Formatting

- Rule: “Custom formula” →

=COUNTIF(B:B,B1)>1
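If you prefer a script to conditional formatting, the same check takes a few lines of Python. The sketch assumes the export has `URL` and `Title` columns, as in the sample above, and compares titles case-insensitively, which is also how COUNTIF behaves.

```python
import csv
import io
from collections import defaultdict


def duplicate_titles(csv_text: str) -> dict:
    """Map each repeated title (case-folded) to the URLs that share it."""
    groups = defaultdict(list)
    for row in csv.DictReader(io.StringIO(csv_text)):
        title = row["Title"].strip()
        if title:  # empty titles are a separate problem, not duplicates
            groups[title.casefold()].append(row["URL"])
    return {title: urls for title, urls in groups.items() if len(urls) > 1}
```

Unlike highlighting, this lists the URLs grouped by shared title, which is easier to hand to whoever rewrites them.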

4. URL Structure Analysis

Exported URLs can be split into segments and analyzed:

- Which categories have the most pages?

- How deep is the URL structure?

- Are there unexpected paths?
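The first two questions can be answered with a short script. In this sketch a "category" is simply the first path segment and depth is the number of segments; both are simplifying assumptions that may not match how every site is organized.

```python
from collections import Counter
from urllib.parse import urlsplit


def url_structure(urls: list[str]) -> tuple[Counter, Counter]:
    """Count pages per top-level section and per path depth."""
    sections: Counter = Counter()
    depths: Counter = Counter()
    for url in urls:
        segments = [part for part in urlsplit(url).path.split("/") if part]
        sections[segments[0] if segments else "(root)"] += 1
        depths[len(segments)] += 1
    return sections, depths
```

`sections.most_common()` then shows the largest categories, and a top-level section you do not recognize is a candidate unexpected path.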

5. Comparison with Google Index

Compare sitemap URLs with Google Search Console data:

- Export URLs from Sitemap Analyzer

- Export indexed pages from GSC

- Find differences (URLs in sitemap but not in index)
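The last step is a set difference. The sketch below assumes both exports have already been loaded into lists of URLs; the light normalization (lowercase host, fragment dropped) is a choice made for this example, and trailing slashes are deliberately left alone because they can identify different pages.

```python
from urllib.parse import urlsplit, urlunsplit


def normalize(url: str) -> str:
    """Lowercase the host and drop the fragment so equal URLs compare equal."""
    parts = urlsplit(url.strip())
    return urlunsplit(
        (parts.scheme, parts.netloc.lower(), parts.path or "/", parts.query, "")
    )


def not_indexed(sitemap_urls: list[str], indexed_urls: list[str]) -> list[str]:
    """Return sitemap URLs missing from the indexed list, in sitemap order."""
    indexed = {normalize(url) for url in indexed_urls}
    return [url for url in sitemap_urls if normalize(url) not in indexed]
```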

Comparison View — Monitoring Changes Over Time

Re-analyzing the same sitemap activates the Comparison View. This is where you can see change dynamics — what was modified between analyses, with detailed comparison of old and new meta tag values.

For RAG systems, this is a critical content pruning (cleanup) process. If key sections disappeared from the sitemap, the AI system must be notified to remove outdated embeddings and stop generating answers based on content that no longer exists.
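A comparison of two audit runs could be sketched as follows, assuming each run is stored as a dict mapping a URL to its metadata fields (a hypothetical layout chosen for this example). The `removed` list is what a RAG pipeline would use to decide which embeddings to delete.

```python
def compare_snapshots(old: dict, new: dict) -> tuple[list, list, dict]:
    """Return (added URLs, removed URLs, changed fields per URL)."""
    added = sorted(new.keys() - old.keys())
    removed = sorted(old.keys() - new.keys())
    changed = {}
    for url in old.keys() & new.keys():
        # Keep only the fields whose value differs, as (old value, new value).
        diff = {
            field: (old[url].get(field), value)
            for field, value in new[url].items()
            if old[url].get(field) != value
        }
        if diff:
            changed[url] = diff
    return added, removed, changed
```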

Comparison View — comparing changes in descriptions and modification dates between analyses.


Sitemap and Google Search Console

In the “Page indexing” report in GSC, use the “All submitted pages” filter. A large share of URLs with the status “Discovered - currently not indexed” is a red flag, as in the example below, where only 5.16K of 28.7K known pages (about 18%) are indexed.

Google Search Console — over 80% of pages are not indexed. Most common causes: duplicates, redirects, and thin content.


The most common issues visible in the GSC report are “Duplicate without user-selected canonical,” “Page with redirect,” and “Crawled — currently not indexed.” Each of these problems can be identified and fixed in Uper SEO Auditor — you’ll find thin content and redirects in Issues Summary, and catch duplicate titles and descriptions in the Preview view.

Advanced Scaling and Multimedia

For large sites, don’t forget about additional sitemap types and scaling techniques.

Sitemap Index

Split the sitemap into multiple files (each limited to 50,000 URLs or 50 MB uncompressed) and list them in a sitemap index. This lets crawlers process large sites more efficiently, and splitting by section lets you track the indexing of each section separately in Search Console.
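A minimal sketch of the split, using Python's standard `xml.etree.ElementTree`. The `sitemap-N.xml` file naming and the function names are assumptions of this example; only the 50,000-URL limit comes from the protocol.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"
MAX_URLS_PER_FILE = 50_000  # per-file limit from the sitemaps.org protocol

# Serialize the sitemap namespace as the default xmlns, without a prefix.
ET.register_namespace("", SITEMAP_NS)


def chunk(urls: list[str], size: int = MAX_URLS_PER_FILE) -> list[list[str]]:
    """Split the URL list into groups that each fit into one sitemap file."""
    return [urls[i:i + size] for i in range(0, len(urls), size)]


def build_index(base_url: str, file_count: int) -> str:
    """Return sitemap index XML pointing at sitemap-1.xml .. sitemap-N.xml."""
    root = ET.Element(f"{{{SITEMAP_NS}}}sitemapindex")
    for number in range(1, file_count + 1):
        entry = ET.SubElement(root, f"{{{SITEMAP_NS}}}sitemap")
        loc = ET.SubElement(entry, f"{{{SITEMAP_NS}}}loc")
        loc.text = f"{base_url}/sitemap-{number}.xml"
    return ET.tostring(root, encoding="unicode")
```

Chunking by section instead of by raw count works the same way: build one URL list per section and pass each through `chunk`.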

Video/Image Sitemap

Video and image sitemaps point crawlers at media files and, for video, carry a title, description, and thumbnail that AI visual search can use. With the evolution of multimodal models like Gemini and GPT-4o, this data is becoming increasingly valuable.

Hreflang in XML

The cleanest way to map language versions without bloating the <head> section. Especially important for large multilingual sites, where hreflang in HTML can significantly increase document size.
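For illustration, the sketch below generates such a sitemap with Python's standard library. It follows the documented pattern where every language version gets its own `<url>` entry listing all alternates, itself included; the function name and the input format (one dict of language code to URL per page) are assumptions of this example.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"
XHTML_NS = "http://www.w3.org/1999/xhtml"

ET.register_namespace("", SITEMAP_NS)
ET.register_namespace("xhtml", XHTML_NS)


def build_hreflang_sitemap(pages: list[dict]) -> str:
    """pages: one {language code: URL} dict per translated page."""
    urlset = ET.Element(f"{{{SITEMAP_NS}}}urlset")
    for versions in pages:
        for url in versions.values():
            entry = ET.SubElement(urlset, f"{{{SITEMAP_NS}}}url")
            ET.SubElement(entry, f"{{{SITEMAP_NS}}}loc").text = url
            # Every version lists all alternates, including itself.
            for lang, alternate in versions.items():
                ET.SubElement(
                    entry,
                    f"{{{XHTML_NS}}}link",
                    rel="alternate",
                    hreflang=lang,
                    href=alternate,
                )
    return ET.tostring(urlset, encoding="unicode")
```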

Handling Large Sitemaps

Sitemap Analyzer handles large websites:

- Sitemap index — automatically fetches all sub-sitemaps

- Safety limit — max 100,000 URLs

- Stop option — Stop button available at any time

- Partial results — you can export data even after interruption

Tips for Large Websites

- Test on a smaller sitemap, e.g., /blog-sitemap.xml instead of the index

- Analyze in parts: divide the analysis by categories

- Export regularly: save results before continuing

Checklist: Technical Sitemap Audit

- Declaration: Is the sitemap in robots.txt?

- HTTP Codes: Have you eliminated all 3xx and 4xx (use the filter in Extract URLs)?

- Metadata: Have you fixed “Missing H1” and “Title too long” errors?

- Content: Have thin content pages been expanded or removed from the sitemap?

- Duplicates: Are titles and descriptions unique (use conditional formatting in your spreadsheet)?

- Hygiene: Are you regularly cleaning old data in Cached Data Management to see the current state?

Cache data management — re-analysis reveals changes since the last audit.


Summary

Analyzing sitemaps with Uper SEO Auditor using real examples from platforms like Linkhouse and WhitePress proves that even the biggest players need to maintain XML file hygiene. The tool combines advanced diagnostics (Issues Summary, Comparison View) with practical export features that allow further data processing in spreadsheets. In 2026, a clean sitemap is the foundation of visibility in the world of AI algorithms.

Try UPER SEO Auditor and analyze your website’s sitemap.

Sources

- Google Search Central: Sitemaps overview https://developers.google.com/search/docs/crawling-indexing/sitemaps/overview

- Google Search Central: Build and submit a sitemap https://developers.google.com/search/docs/crawling-indexing/sitemaps/build-sitemap

- Sitemaps.org: XML format https://www.sitemaps.org/protocol.html

- Google Search Console: Sitemap report https://support.google.com/webmasters/answer/7451001